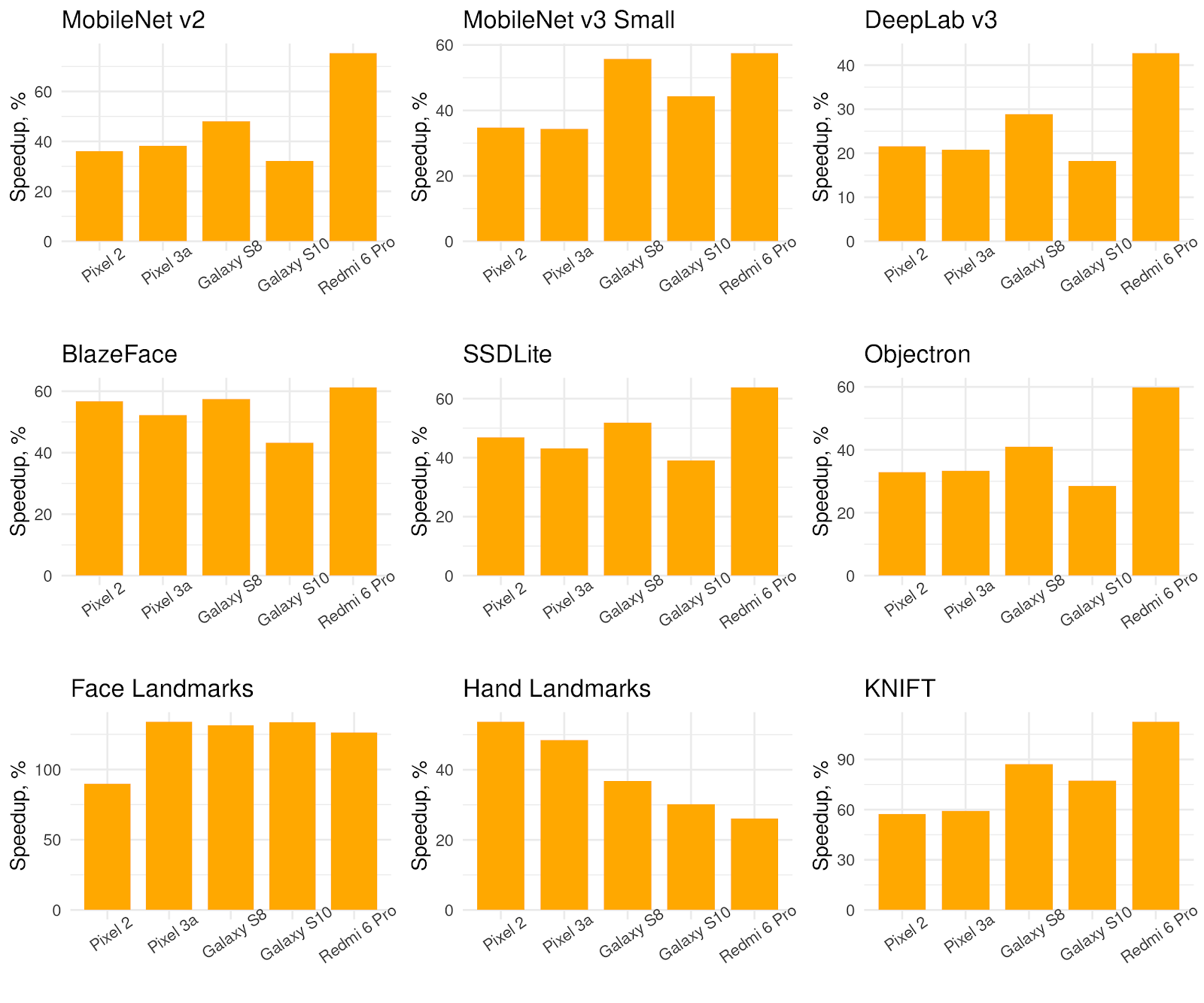

XNNPACK and TensorFlow Lite now support efficient inference of sparse networks. Researchers demonstrate… | Inference, Matrix multiplication, Machine learning models

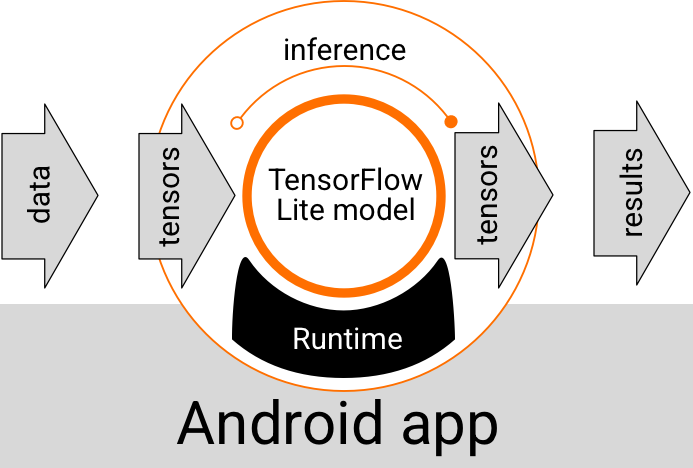

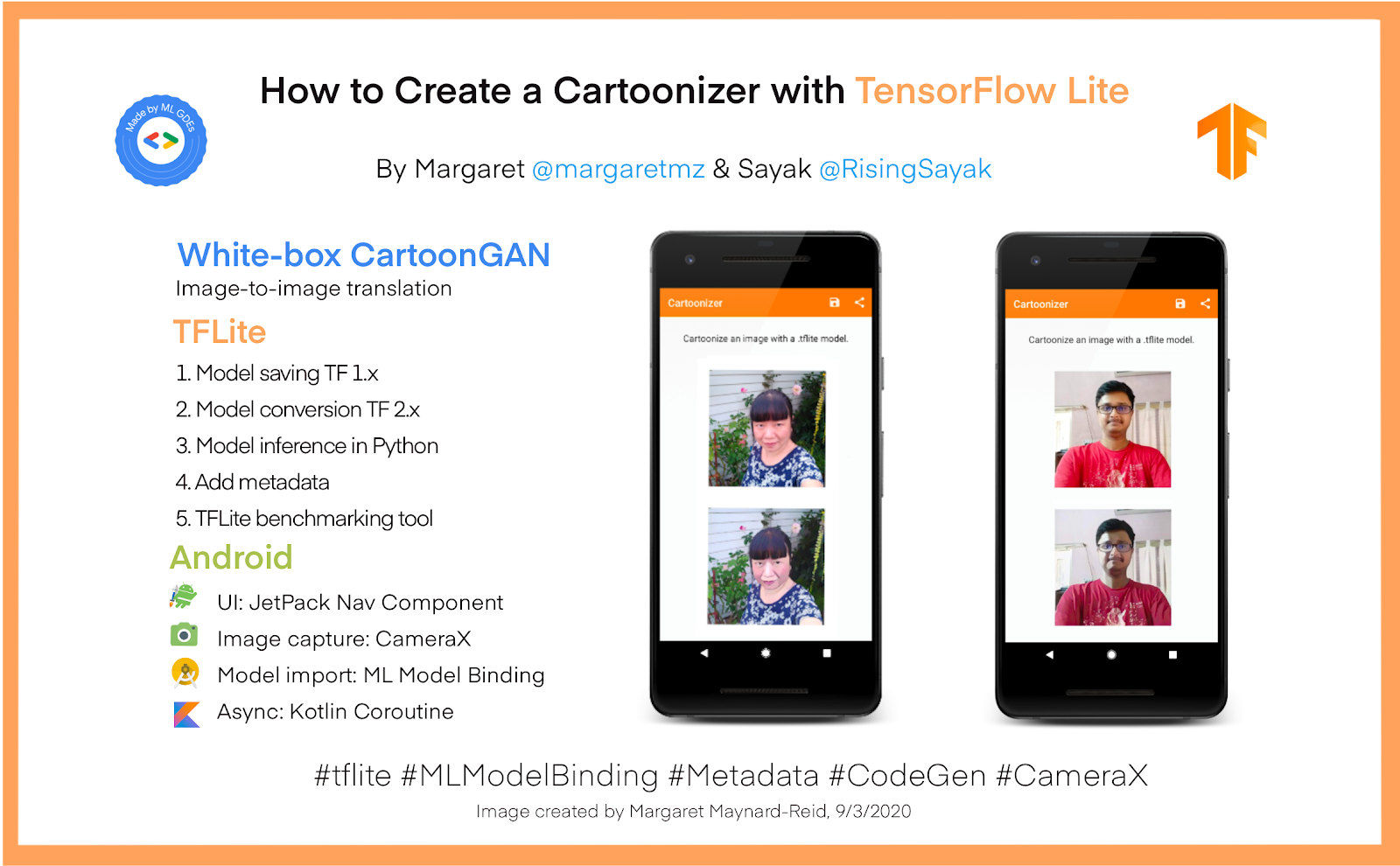

GitHub - dailystudio/tflite-run-inference-with-metadata: This repository illustrates three approaches to using TensorFlow Lite models with metadata on Android platforms.

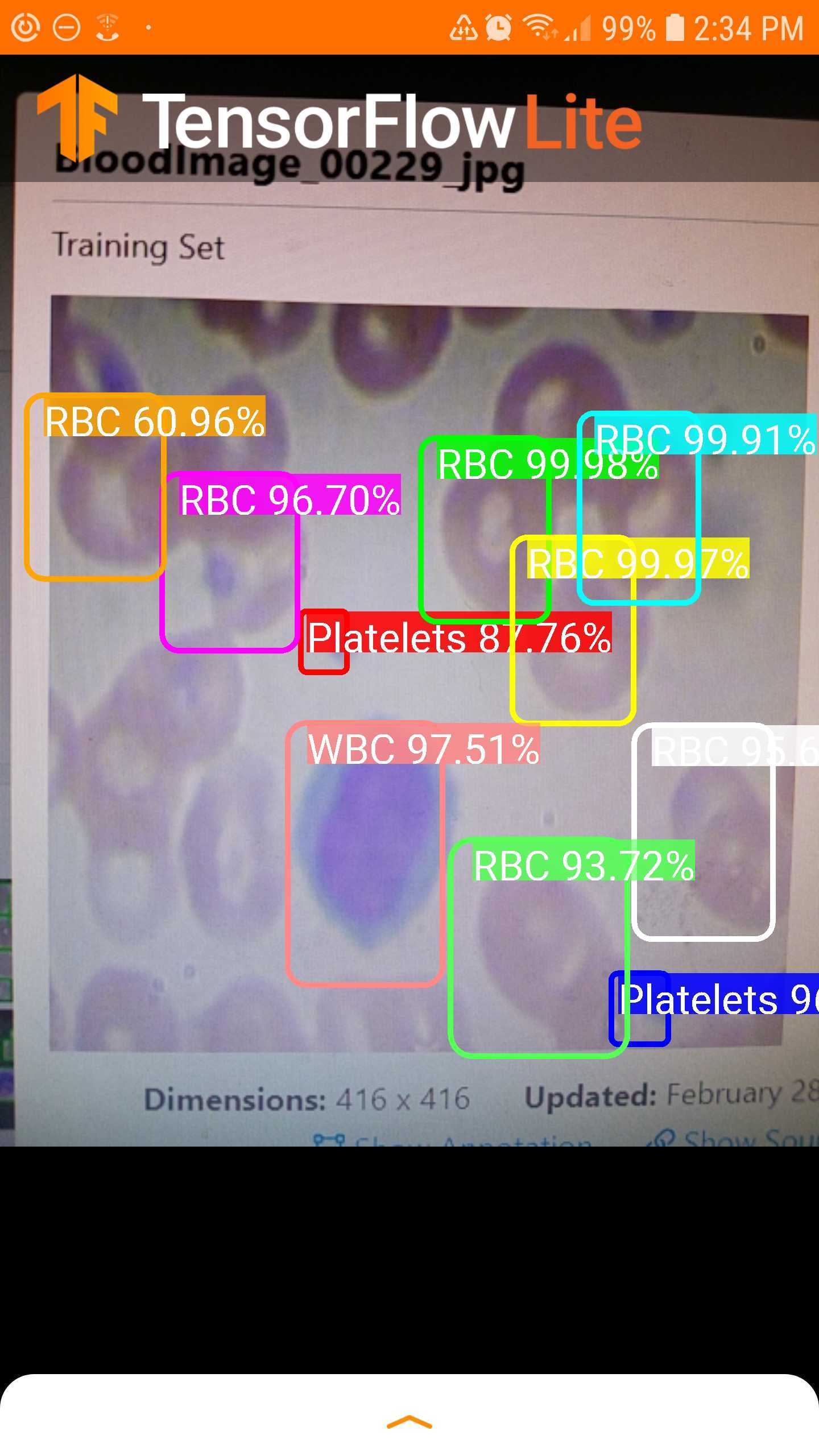

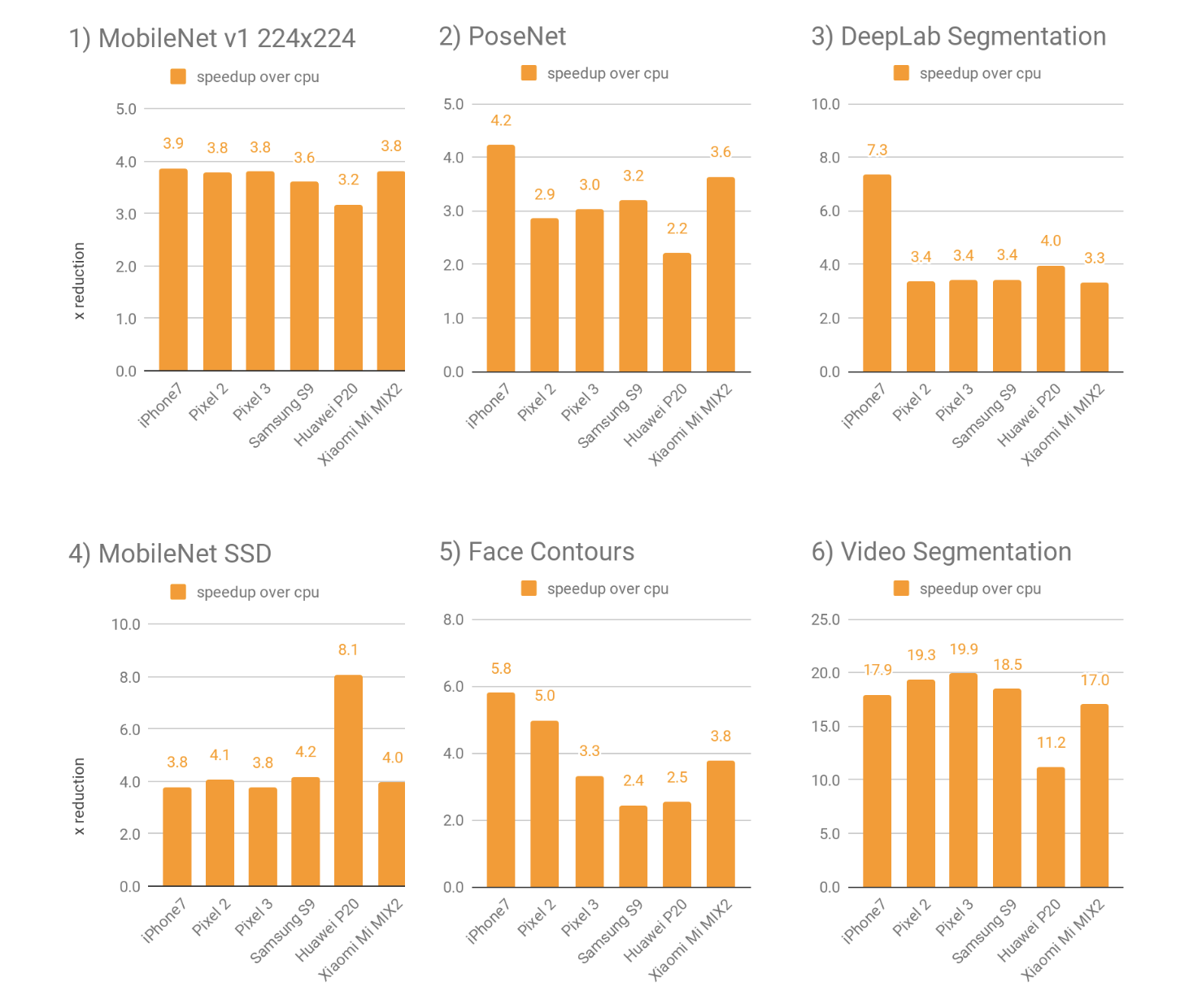

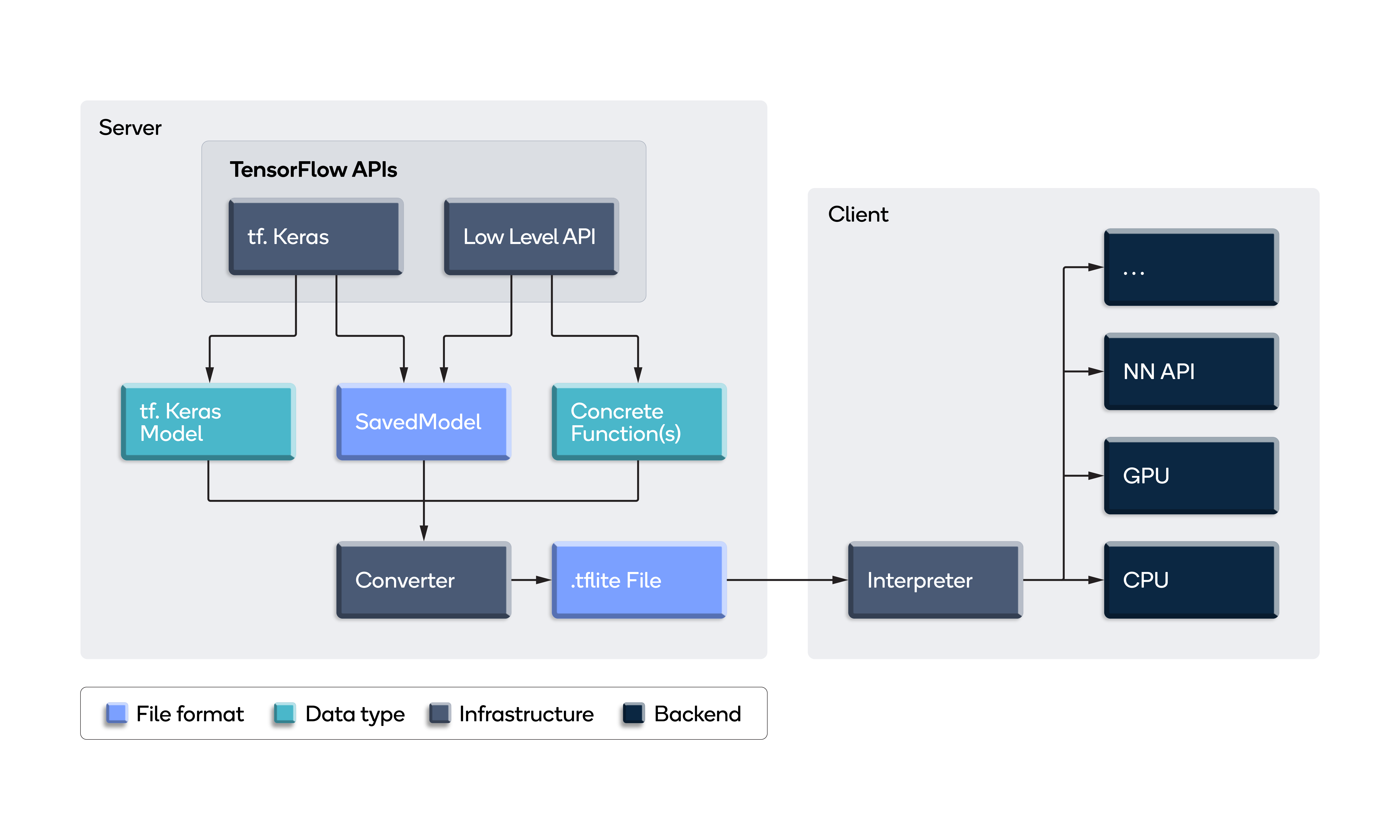

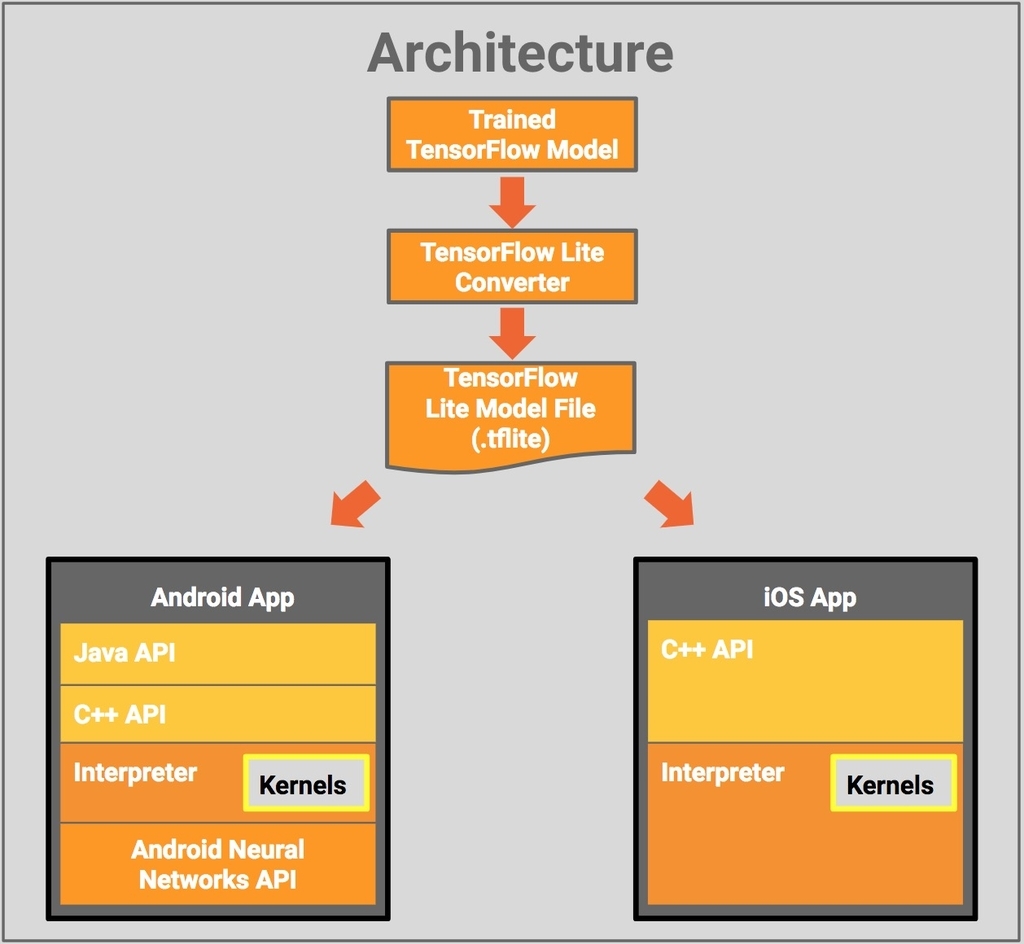

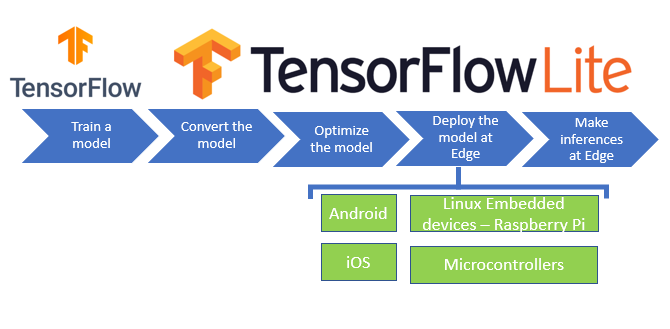

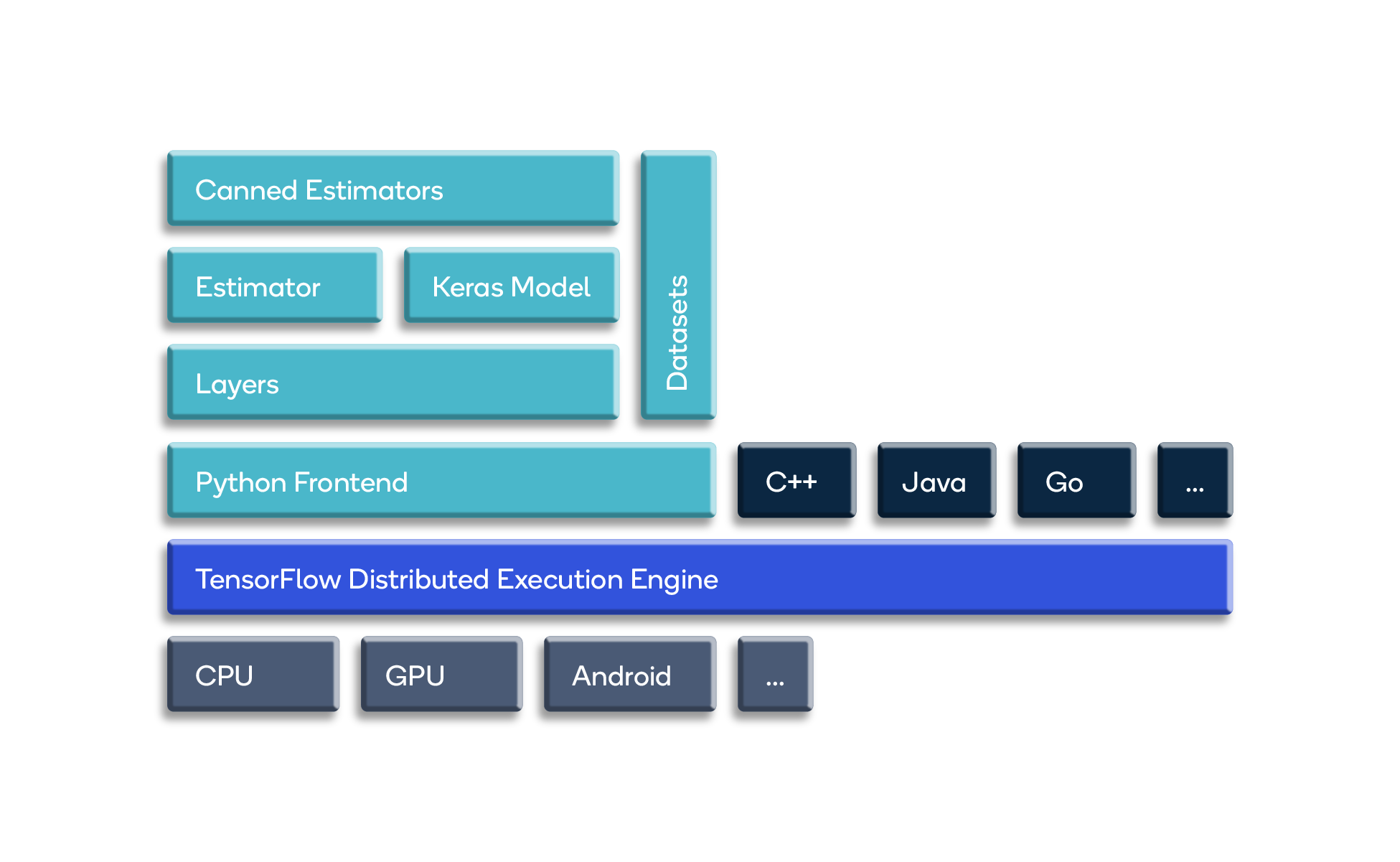

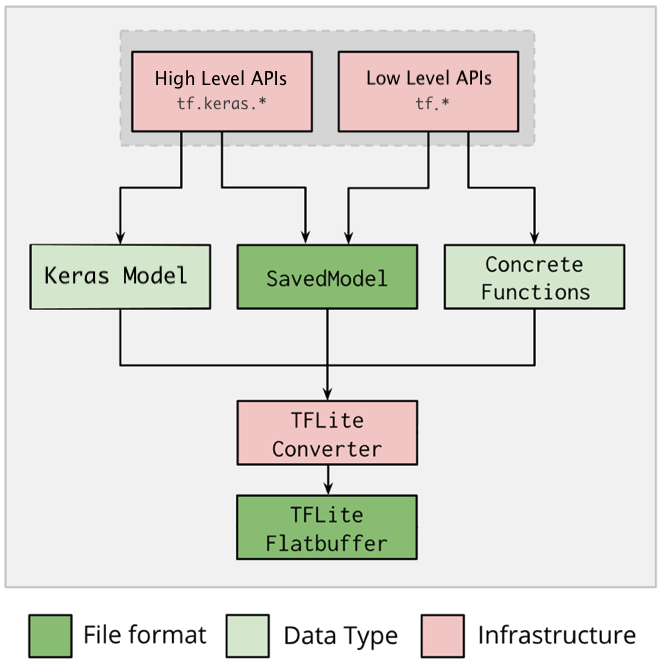

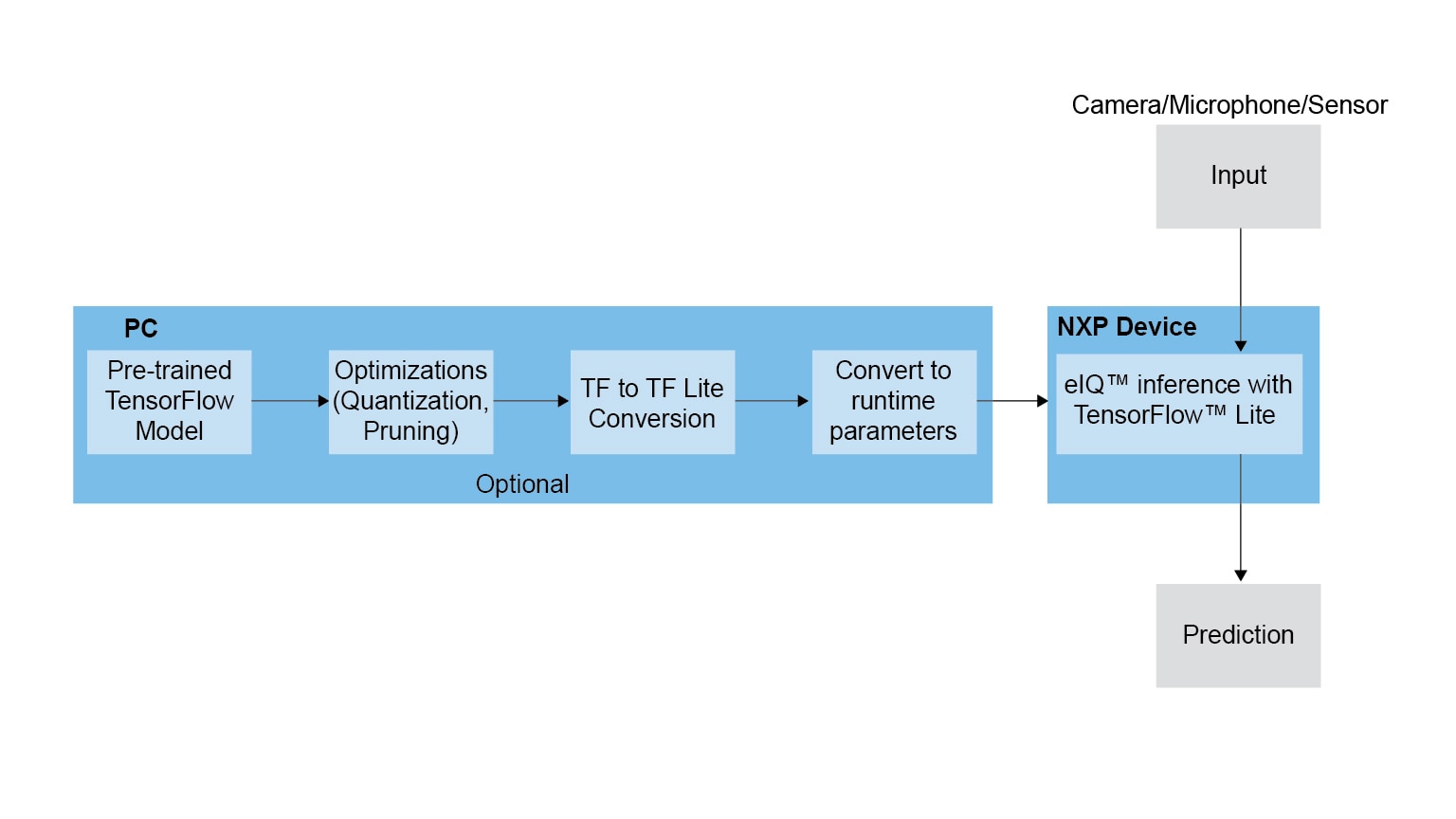

Everything about TensorFlow Lite and start deploying your machine learning model - Latest Open Tech From Seeed

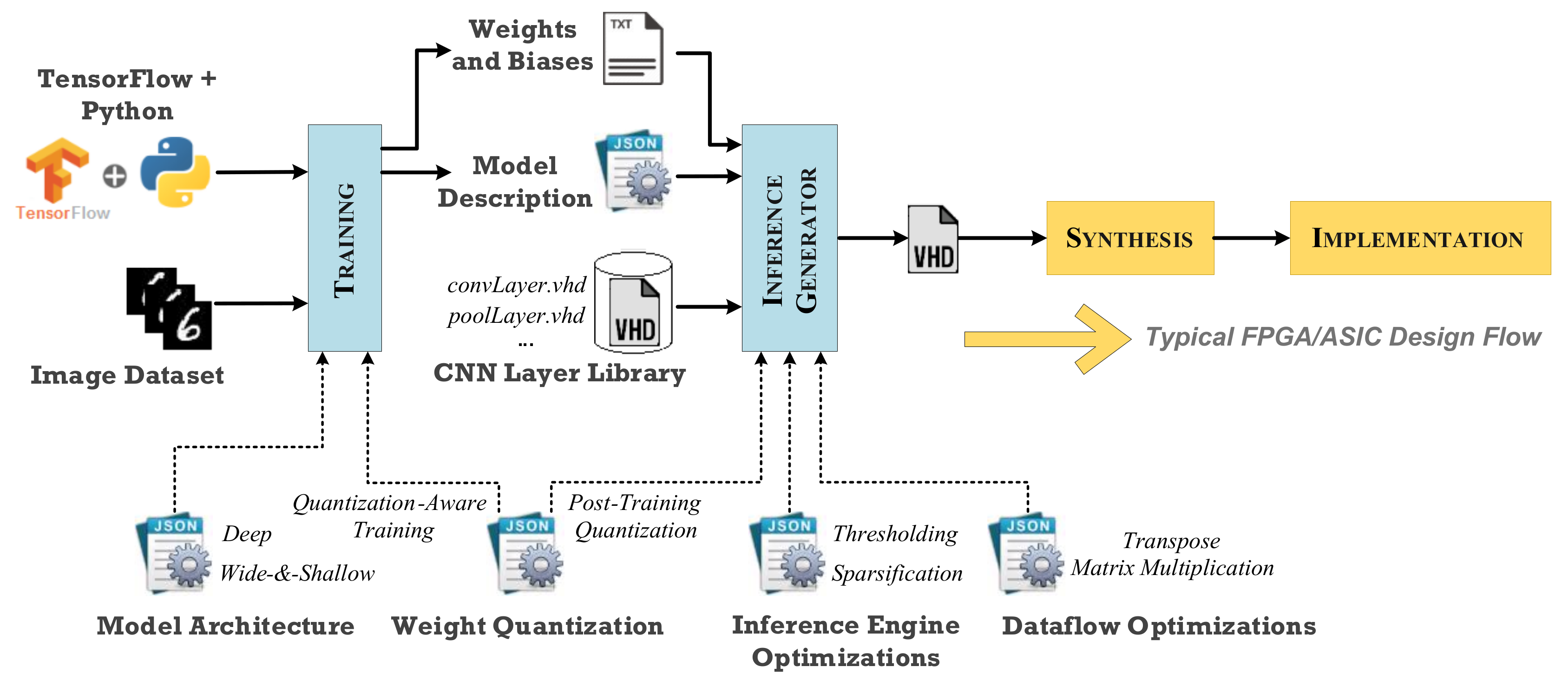

Technologies | Free Full-Text | A TensorFlow Extension Framework for Optimized Generation of Hardware CNN Inference Engines